When it comes to understanding Alexa design, it’s important to start from the beginning. Amazon’s cloud-based voice service, Alexa, is seemingly everywhere these days. There are already roughly 4.2 billion devices in use around the globe today, and experts anticipate a whopping 8 billion digital voice assistants will be responding to user commands, providing information and assisting in the control of other connected electronics by 2023.

The “brain” behind millions of connected devices from Amazon and third-party device manufacturers, Alexa enables consumers to automatically control their devices with their voice. From checking the news and listening to music to playing a game and so much more, Alexa allows people to access content, services and more than 100,000 “skills” just by using their voice.

So, what are the steps for designing an Alexa voice experience?

Steps for Alexa System Design

Alexa Connectivity

Although Alexa can support a small number of locally processed commands, a device needs internet connectivity to take advantage of Alexa’s full capabilities. Amazon offers a range of reference designs to help you build your device. The connectivity requirements are the same as for a regular IoT device. However, when you add voice capabilities to a connected device, capturing quality audio adds complexity to the design process.

Capturing — and Processing — Quality Audio

While it’s easy enough to slap a microphone on a product to capture audio, we’ve all been on enough video conferences in the past year to realize that not all setups capture quality audio. Capturing it reliably means addressing the product’s mechanical design and acoustic components as well.

With just one microphone, capturing audio of high quality can be a challenge. Other considerations include the number of microphones a device needs, the distance between those microphones, how the microphones are bonded to the product’s case or enclosure, vibration, etc. In order to create an engaging voice experience, the ability to detect, recognize and capture quality audio is crucial.

For effective voice capture and detection/recognition, devices need to be able to process the audio to enhance its quality. A good audio capture solution, such as a microphone array, uses multiple microphones and digital signal processing (DSP) techniques like beamforming to focus on or reject particular sources of sound. The microphones pick up sound waves coming from different directions, and the DSP stage differentiates between audio sources, filters out certain sounds and selects the intended sound signal, thereby enhancing the audio’s quality.
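To make beamforming concrete, here is a minimal sketch of its simplest form, a delay-and-sum beamformer, in plain Python. It is an illustration under stated assumptions rather than a production design: the array geometry, sample rate and the `simulate_capture` helper are hypothetical, the source is assumed to be far away, and delays are rounded to whole samples.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in room-temperature air


def arrival_delays(mic_positions_m, angle_deg, sample_rate_hz):
    """Whole-sample arrival delays for a far-field source on a linear array.

    angle_deg is measured from broadside (0 = straight ahead), positive toward
    the +x end of the array. Microphones nearer the source hear it sooner.
    """
    sin_a = math.sin(math.radians(angle_deg))
    raw = [round(-x * sin_a / SPEED_OF_SOUND_M_S * sample_rate_hz)
           for x in mic_positions_m]
    earliest = min(raw)
    return [d - earliest for d in raw]  # earliest microphone gets delay 0


def delay_and_sum(channels, delays):
    """Undo each channel's arrival delay, then average the aligned channels."""
    length = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[i + d] for ch, d in zip(channels, delays)) / len(channels)
        for i in range(length)
    ]


def simulate_capture(source, delays):
    """Illustration-only helper: what each microphone records for one source."""
    pad = max(delays)
    return [[0.0] * d + list(source) + [0.0] * (pad - d) for d in delays]


if __name__ == "__main__":
    mics = [0.0, 0.04, 0.08, 0.12]  # hypothetical 4-mic array, 4 cm spacing
    rate = 16000                    # hypothetical sample rate in Hz
    tone = [math.sin(2 * math.pi * 2000 * n / rate) for n in range(1600)]

    def energy(signal):
        return sum(v * v for v in signal)

    look = arrival_delays(mics, 30, rate)    # steer toward the talker
    other = arrival_delays(mics, -60, rate)  # direction of an interfering sound
    on_target = delay_and_sum(simulate_capture(tone, look), look)
    off_target = delay_and_sum(simulate_capture(tone, other), look)
    print("on-target energy kept: ", energy(on_target) / energy(tone))
    print("off-target energy kept:", energy(off_target) / energy(tone))
```

Sound arriving from the steered direction lines up across channels and adds coherently, while sound from other directions is misaligned and partially cancels. Real products typically add fractional delays, adaptive filtering and echo cancellation on top of this idea.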

Sending Audio to Alexa Wake Word Engine

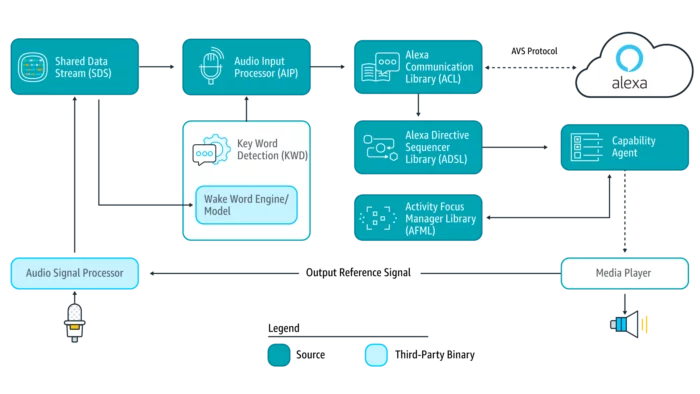

Once the audio is captured and cleaned, it is fed to a wake word engine (WWE) that is monitoring the audio stream for a predefined keyword, such as “Alexa.” When your WWE detects the wake word, the device starts streaming the audio input to the Alexa Voice Service (AVS) server in the cloud for further speech recognition processing. There, the audio is identified and transcribed to text before natural language processing kicks in to help determine the commands from the user-uttered sentences.
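The gating behavior described above can be sketched as a small loop. This is a simplified illustration, not the AVS Device SDK: `detect_wake_word` and `send_to_cloud` are hypothetical callables standing in for a real wake word engine and a real cloud connection, and the short pre-roll buffer is an assumption made so that audio captured just before detection is not lost.

```python
from collections import deque


def gate_audio(frames, detect_wake_word, send_to_cloud, preroll_frames=3):
    """Forward audio frames to the cloud only once the wake word is heard.

    frames           -- iterable of audio frames (any object)
    detect_wake_word -- hypothetical callable: frame -> bool
    send_to_cloud    -- hypothetical callable that uploads one frame
    preroll_frames   -- how many recent frames to keep before detection
    """
    preroll = deque(maxlen=preroll_frames)  # most recent frames, oldest dropped
    streaming = False
    for frame in frames:
        if streaming:
            send_to_cloud(frame)
            continue
        preroll.append(frame)
        if detect_wake_word(frame):
            streaming = True
            # Flush buffered audio, which includes the wake word itself.
            for buffered in preroll:
                send_to_cloud(buffered)
    return streaming


if __name__ == "__main__":
    uploaded = []
    heard = ["tv noise", "tv noise", "alexa", "play", "some jazz"]
    gate_audio(heard, lambda f: f == "alexa", uploaded.append, preroll_frames=2)
    print(uploaded)
```

Nothing leaves the device until the detector fires. The sketch omits end-of-speech handling, which a real device needs in order to stop streaming after the user finishes talking.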

By converting a user’s command into a set of instructions, the product can then perform the task the user asked it to do if its software has been programmed to do so. Fortunately, when it comes to Alexa, Amazon typically provides a development kit and software libraries that enable devices to process audio inputs and triggers, establish persistent connections with AVS and handle all Alexa interactions.

As an example, if a user asks an Alexa device to play a specific song, the device will start by detecting the Alexa wake word. Once the wake word is detected, the device will send a recording of the wake word to the AVS server for confirmation (this helps minimize the rate of false positives) and set up an audio path to transmit the rest of the user command. AVS processes that audio input and sends a “directive” back to the device. From there, the device begins playing that song.
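On the device side, handling a directive largely comes down to routing a JSON message to the right piece of code. The sketch below shows one way that might look, assuming a directive envelope with a `header` (carrying `namespace` and `name`) and a `payload`. The handler functions are hypothetical, and the payload fields are trimmed for illustration, so check the AVS documentation for the full message definitions.

```python
import json


def handle_play(payload):
    """Hypothetical handler: start playback of the stream named in the payload."""
    url = payload["audioItem"]["stream"]["url"]
    return f"playing {url}"


def handle_set_volume(payload):
    """Hypothetical handler: apply the requested volume level."""
    return f"volume set to {payload['volume']}"


# Route each (namespace, name) pair to the code that implements it.
HANDLERS = {
    ("AudioPlayer", "Play"): handle_play,
    ("Speaker", "SetVolume"): handle_set_volume,
}


def dispatch(raw_message):
    """Parse one directive message and call the matching handler."""
    directive = json.loads(raw_message)["directive"]
    header = directive["header"]
    handler = HANDLERS.get((header["namespace"], header["name"]))
    if handler is None:
        return f"unsupported directive {header['namespace']}.{header['name']}"
    return handler(directive.get("payload", {}))


if __name__ == "__main__":
    message = json.dumps({
        "directive": {
            "header": {"namespace": "AudioPlayer", "name": "Play"},
            "payload": {"audioItem": {"stream": {"url": "https://example.com/song"}}},
        }
    })
    print(dispatch(message))
```

Keeping the routing table separate from the handlers makes it straightforward to add capabilities later and to report unsupported directives instead of failing silently.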

Credit: Amazon Alexa (https://developer.amazon.com/en-US/docs/alexa/avs-device-sdk/overview.html)

Alexa Design Compliance Testing and Certification

Once you’ve developed the device’s software and have a prototype up and running, you need to start compliance testing. Since customers expect a high-quality and consistent experience across all Alexa products, Amazon won’t let you just slap an Amazon Alexa logo on your device if it’s not up to par.

Consequently, there is a series of tests any product has to pass to be certified. First is the self-testing phase. At Cardinal Peak, we have an Alexa test lab set up that allows us to test our own devices before submitting them to Amazon for further testing.

Self-test categories for Alexa design include:

- Functional — Does your Alexa integration function the way customers expect?

- UX (User Experience) — Is it easy for a customer to interact with your product using natural voice control?

- Security — Have you implemented all reasonable security measures to prevent unauthorized access to the Alexa Service?

- Acoustic — Does the wake word recognition perform as expected and does your device handle user requests appropriately?

- Music — Each music service provider your device supports (e.g., Amazon Music, iHeartRadio, Kindle books, Pandora, Spotify, etc.) has its own set of requirements that devices need to be tested and certified against.

After your device passes the required self-tests, the completed checklists need to be verified by Amazon or one of Amazon’s authorized third-party labs. If your self-testing results receive approval, it’s time to submit your device for certification testing. All devices must go through the Amazon testing and certification process before receiving approval for launch.

During this process, which usually takes one to three months, the Amazon testing team runs tests to verify device functionality, confirm that product requirements are met and check that the device provides a quality experience for customers. If your product passes all of the Amazon tests, it becomes eligible for certification and approval for launch.

Additional Amazon Alexa Design Considerations

Beyond connectivity, acoustics and mechanical design concerns, it’s important to also consider the following aspects when designing your Alexa device:

- How users will use your product — Who is your target audience and what problem are you trying to solve for them? While it’s impossible to predict how users will use your product, understanding how they might use it will help you create a design that meets their needs.

- The environment(s) in which the product will be used — Where will the device be used? A voice assistant that sits in your home might have to deal with sounds from the radio, TV, kids running around or pets, while in-vehicle voice assistants contend with vibration noise from the road. And wearable devices open an entirely different avenue of concerns, including weather, where on the body the device will be worn and the ability to effectively capture audio/detect the wake word while the product is moving.

- Power source — Will your device be battery powered or plugged in? If it’s wired, then power is less of an issue. Wired solutions are preferred when the device is stationary and responds to “events” like notifications. However, many users prefer to use their voice assistants on the go, and mobile or unattached devices present an additional challenge because they need to regularly sleep until user interaction occurs. Fortunately, if a device is battery powered, there are chips available, including the Syntiant Neural Decision Processor, that enable ultralow-power always-on wake word detection.

- Audio processing chain — Since a lot of devices have both audio and voice functionality, understanding what the audio processing chain will look like is important to manage voice interactions versus the normal audio the device plays. There’s nothing worse than listening to music and issuing a command only to have your device fail to interact.

- Cost — A critical but occasionally overlooked aspect of the design process: budget. Beyond development costs, there are also costs associated with the bill of materials (BOM), silicon real estate, physical size, time to market and more that should be factored into your design process.
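To see why the power source consideration above matters so much for battery-powered designs, a rough duty-cycle estimate helps. The sketch below is back-of-the-envelope arithmetic only: every current and capacity figure is a made-up placeholder, not a measurement of any real chip, and the model ignores battery aging, regulator losses and radio traffic.

```python
def average_current_ma(idle_ma, active_ma, active_fraction):
    """Time-weighted average current draw in milliamps.

    idle_ma         -- draw while only the wake word detector is listening
    active_ma       -- draw while the device is awake and talking to the cloud
    active_fraction -- share of time spent awake, between 0.0 and 1.0
    """
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return idle_ma * (1.0 - active_fraction) + active_ma * active_fraction


def battery_life_hours(capacity_mah, idle_ma, active_ma, active_fraction):
    """Estimated runtime for a battery of the given capacity."""
    return capacity_mah / average_current_ma(idle_ma, active_ma, active_fraction)


if __name__ == "__main__":
    # Hypothetical device: 1000 mAh battery, awake 1% of the time at 100 mA.
    for idle_ma in (20.0, 0.5):  # placeholder always-on listening currents
        hours = battery_life_hours(1000.0, idle_ma, 100.0, 0.01)
        print(f"idle draw {idle_ma} mA -> about {hours:.0f} hours")
```

Because the device spends most of its time listening rather than responding, the always-on listening current tends to dominate the estimate, which is why low-power wake word detection is attractive for portable products.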

When it’s all said and done, every Alexa-enabled voice product faces different challenges. While power is not an issue if the device is plugged in and DSP is not a problem in a quiet environment, product designers need to understand the solution, the market they’re targeting and the challenges associated with that environment to effectively add Alexa to their device.

Cardinal Peak is an Amazon-approved Alexa Voice Services (AVS) Systems Integrator, and we have effectively and creatively designed a range of commercial A/V, IoT and security products featuring Alexa. Plus, our extensive Amazon development experience ensures we can help you develop an Alexa-enabled device for the Amazon Sidewalk network. From the selection of microphone arrays to signal enhancement processing and acoustics testing, you can trust us to quickly and efficiently bring Alexa-enabled products that meet the challenges of noisy and acoustically difficult environments to market. If you’re looking for a voice systems engineering firm to partner with, contact Cardinal Peak!