An FPGA (field-programmable gate array) is a gate array in which a fabric based on static random-access memory (SRAM) replaces the metal interconnections between logic elements. To understand how an FPGA works, we must first understand how gate arrays came about.

A long time ago, in a galaxy far, far away, if you needed to develop an all-digital integrated circuit (IC) but did not have the budget for developing from scratch an application-specific integrated circuit (ASIC), you could settle for developing a gate array.

Complex History of Gate Arrays

A gate array was a piece of predesigned silicon from a third-party vendor, with a large array of memories, flip-flops and combinatorial logic elements (such as AND, OR and INVERTER gates) arranged in a huge repeating array of elements.

Since all digital circuitry basics can be condensed down to memory, flip-flops and combinatorial logic elements, it is possible, within the speed and size limitations of the gate array silicon, to create any digital circuit from a silicon gate array.

You would (usually) provide to the gate array vendor a schematic drawn from a library of carefully curated digital circuit elements, and they would compile your design into further-simplified digital circuit elements that matched the available elements already implemented on the silicon gate array. (For example, a NAND (not AND) gate in the abstract circuit could be partitioned into an AND gate followed by an INVERTER using the simplified elements found on the gate array.)
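The NAND example can be sketched in a few lines of Python. This is only an illustration of the idea, not how any vendor's compiler worked; the function names (`and_gate`, `inverter`, `nand_abstract`, `nand_mapped`) are hypothetical.

```python
from itertools import product

# Hypothetical primitive cells assumed to exist on the gate array.
def and_gate(a: int, b: int) -> int:
    return a & b

def inverter(a: int) -> int:
    return a ^ 1

def nand_abstract(a: int, b: int) -> int:
    """NAND as it appears in the designer's schematic."""
    return 0 if (a and b) else 1

def nand_mapped(a: int, b: int) -> int:
    """The same NAND rebuilt from the array's AND and INVERTER cells."""
    return inverter(and_gate(a, b))

# Truth table of the mapped version: (a, b, output)
truth_table = [(a, b, nand_mapped(a, b)) for a, b in product((0, 1), repeat=2)]
```

For all four input combinations, `nand_mapped` returns the same value as `nand_abstract`, which is the property the mapping step has to preserve.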

Once your more-abstract digital circuit had been compiled into a less-abstract circuit that matched simpler silicon gate array elements, a second compiler was run, which figured out how to connect together logic elements in the silicon gate array in such a way as to implement your circuit.

When this mapping function was completed, yet another compiler was run to figure out the minimum number of metal connections connecting the output of one such silicon gate array element to the input of the next silicon gate array element such that the overall result perfectly matched your intended digital circuit functionality.

To prove it, there were simulators that could run input-signal stimulus vectors and generate resulting output vectors on your original circuit. These same vectors could be tested against the circuit reimplemented on the simplified gate array elements, and if the results of the simulation were identical, you knew the implementation matched.
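A rough sketch of that vector comparison, assuming a tiny made-up design, might look like the following. The circuit, `run_vectors`, and the variable names are all hypothetical; real simulators work on netlists rather than Python functions.

```python
from itertools import product

def original_circuit(a: int, b: int, c: int) -> int:
    """Hypothetical design as drawn: (a XOR b) AND c."""
    return (a ^ b) & c

def mapped_circuit(a: int, b: int, c: int) -> int:
    """Same function rebuilt using only AND, OR and INVERTER operations."""
    not_a = a ^ 1
    not_b = b ^ 1
    xor_ab = (a & not_b) | (not_a & b)
    return xor_ab & c

def run_vectors(circuit, stimulus):
    """Apply each input vector to the circuit and collect the outputs."""
    return [circuit(*vector) for vector in stimulus]

# Exhaustive stimulus is feasible here because there are only 3 inputs.
stimulus = list(product((0, 1), repeat=3))
expected = run_vectors(original_circuit, stimulus)
actual = run_vectors(mapped_circuit, stimulus)

# Any vector where the two implementations disagree.
mismatches = [vec for vec, want, got in zip(stimulus, expected, actual) if want != got]
```

An empty `mismatches` list means the two implementations agree on every vector that was applied. With larger designs the stimulus is usually a chosen subset, so agreement only covers the cases that were exercised.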

Then, yet another compiler was run to figure out how to connect wires to all of the necessary elements and to figure out how many layers of metal wiring would have to be added onto the top of the silicon gate array to implement the circuit.

At this point, the simulation model of the gate array could be further enhanced by modeling the delays in the wires incurred by the series resistance in the wire, the stray capacitance to all the nearby wires and thus the ‘RC’ delays injected into each wire.

The test vectors were then run again in this simulation, which now included the wiring delays, and the results were compared against the expected output vectors, demonstrating that your design would run at full speed in the real world.
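To get a feel for the wire-delay check, here is a minimal sketch that treats each wire as a single lumped resistor and capacitor, for which the time to reach 50% of a step input is ln(2)·R·C. The function names and all component values are assumptions for illustration; real timing tools use more detailed, distributed wire models.

```python
import math

def rc_delay_50pct(resistance_ohms: float, capacitance_farads: float) -> float:
    """Time for a lumped RC wire to reach 50% of a step input: ln(2) * R * C."""
    return math.log(2) * resistance_ohms * capacitance_farads

def path_meets_timing(gate_delays_s, wire_rc_pairs, clock_period_s):
    """Sum gate and wire delays along one path and compare to the clock period."""
    wire_delay = sum(rc_delay_50pct(r, c) for r, c in wire_rc_pairs)
    total = sum(gate_delays_s) + wire_delay
    return total <= clock_period_s, total

# Hypothetical path: two gates and two wires, checked against a 10 ns clock.
ok, total_delay = path_meets_timing(
    gate_delays_s=[1.0e-9, 1.2e-9],
    wire_rc_pairs=[(200.0, 0.5e-12), (350.0, 0.8e-12)],
    clock_period_s=10e-9,
)
```

With these made-up numbers the wires add only a fraction of a nanosecond, so `ok` is true. On a long or heavily loaded wire, the RC term can dominate the path.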

The gate array vendor would then create multiple metal layer “masks” to implement your circuit and fabricate them onto the silicon die of their gate arrays, thus completely implementing your digital circuit for a fraction of the cost of a full-custom ASIC.

Along Came Field Programmable Gate Arrays (FPGAs)

Why go into all this detail regarding gate arrays when we wish to understand the basics of a field-programmable gate array (FPGA)?

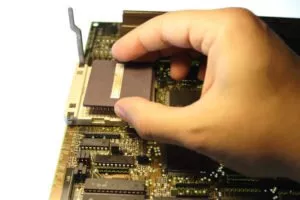

Simply put, an FPGA is just like an older gate array, but the metal interconnections between the gate array logic elements are replaced by a huge “fabric” based on static random-access memory (SRAM). Artfully setting particular SRAM cells to “1” connects the outputs of certain logic elements to the inputs of other logic elements. In this fashion, any digital circuit that could be implemented on a gate array could also be implemented on an FPGA, and by reprogramming the SRAM fabric, you could use the same FPGA to implement a myriad of digital circuits (one at a time).
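As a toy model of that idea, the sketch below uses two SRAM bits to decide which logic element output feeds an inverter. Everything here (`route`, `evaluate`, the two-bit configuration) is a simplified assumption for illustration and does not reflect any real FPGA architecture.

```python
from itertools import product

def route(sources, sram_bits):
    """Pass through the one source whose SRAM bit is set to 1."""
    selected = [sig for sig, bit in zip(sources, sram_bits) if bit == 1]
    if len(selected) != 1:
        raise ValueError("exactly one SRAM bit must be set for this input")
    return selected[0]

def evaluate(a: int, b: int, sram_bits) -> int:
    """Fixed 'silicon': an AND, an OR and an INVERTER. Only the wiring changes."""
    and_out = a & b
    or_out = a | b
    inverter_in = route([and_out, or_out], sram_bits)
    return inverter_in ^ 1

inputs = list(product((0, 1), repeat=2))
as_nand = [evaluate(a, b, [1, 0]) for a, b in inputs]  # AND output -> INVERTER
as_nor = [evaluate(a, b, [0, 1]) for a, b in inputs]   # OR output -> INVERTER
```

The same three elements behave as a NAND with one SRAM setting and as a NOR with the other, which is the one-circuit-at-a-time flexibility described above.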

FPGA Programming & Reprogramming

Indeed, since SRAM has no “wear-out” mechanism, the SRAM could be reprogrammed over and over to implement countless digital circuits.

The simulation flow carries over as well: both the “ideal” FPGA simulation model (which does not represent the wire delays) and the “annotated” simulation (which does include the effect of the RC delays on the SRAM “wires”) work just as they did in the gate array method.

But how do you know which SRAMs need to be programmed to “1” to implement your particular digital circuit? The short answer is: you don’t need to know. By passing your digital circuit through a series of compilers provided to you by the FPGA vendor, the compilers figure out which SRAMs should be set to “1.” They present this information to you in the form of a binary file, which you use to program the FPGA.
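Conceptually, the binary file is just the list of SRAM settings packed into bytes. The sketch below shows one way that packing could work; the layout (most significant bit first, zero padding) and the function names are assumptions, since real vendor file formats are more involved and vendor-specific.

```python
def pack_bitstream(sram_bits) -> bytes:
    """Pack 0/1 SRAM settings into bytes, most significant bit first."""
    bits = list(sram_bits)
    bits += [0] * (-len(bits) % 8)  # pad the last byte with zeros
    out = bytearray()
    for i in range(0, len(bits), 8):
        byte = 0
        for bit in bits[i:i + 8]:
            byte = (byte << 1) | bit
        out.append(byte)
    return bytes(out)

def unpack_bitstream(data: bytes, n_bits: int):
    """Recover the first n_bits SRAM settings from a packed bitstream."""
    bits = []
    for byte in data:
        for shift in range(7, -1, -1):
            bits.append((byte >> shift) & 1)
    return bits[:n_bits]

settings = [1, 0, 1, 0, 0, 0, 0, 0, 1]  # hypothetical 9-cell fabric
image = pack_bitstream(settings)
```

Here `image` is two bytes long, and `unpack_bitstream(image, 9)` returns the original nine settings.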

You will recall a huge benefit of SRAMs is that they have no wear-out mechanism. Unfortunately, they also have a huge downside: SRAM is volatile, so it loses its contents when power is removed. Every time you cycle power to an FPGA, the SRAMs must be programmed. Think about that. Every time your FPGA-based product is powered up, the SRAM inside the FPGA must once again be reprogrammed.

The source of that programming generally takes the form of a small, reprogrammable nonvolatile memory, such as an electrically erasable programmable read-only memory (EEPROM), placed near the FPGA, which the FPGA uses to program its SRAM every time power is applied anew. If you ever want to change the functionality of the digital circuit inside your FPGA, you can reprogram the EEPROM.
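The power-up behavior can be modeled in a few lines. The `Eeprom` and `Fpga` classes below are hypothetical stand-ins that only capture which memory keeps its contents across a power cycle.

```python
class Eeprom:
    """Nonvolatile: keeps its image across power cycles."""
    def __init__(self, image: bytes):
        self.image = image

    def reprogram(self, image: bytes) -> None:
        self.image = image

class Fpga:
    """Volatile SRAM configuration, loaded from the EEPROM at power-up."""
    def __init__(self, eeprom: Eeprom):
        self.eeprom = eeprom
        self.sram = None  # None models an unconfigured device

    def power_up(self) -> None:
        self.sram = bytes(self.eeprom.image)

    def power_down(self) -> None:
        self.sram = None  # SRAM contents are lost without power

eeprom = Eeprom(b"\xa0\x80")
fpga = Fpga(eeprom)
fpga.power_up()
first_config = fpga.sram

fpga.power_down()
eeprom.reprogram(b"\x5f\x01")  # new circuit written to the EEPROM
fpga.power_up()
second_config = fpga.sram
```

After the second power-up the FPGA holds the new image, without anything on the FPGA itself having been changed.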

Consider that. If you were to make the EEPROM reprogrammable (or replaceable) out in the field with a new binary file, then you could perform a field upgrade to the EEPROM, resulting in new hardware functionality in the field. With this use case, though, you would want to authenticate the new binary file to confirm it has not been tampered with; otherwise, hackers could take over the functionality of your product after a field upgrade.
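One possible way to check a field-upgrade image is a keyed hash. The sketch below uses HMAC-SHA256 from Python's standard library; choosing HMAC with a shared secret is an assumption made for brevity, and many products would instead use an asymmetric signature so that no secret key lives on the device. The function names and key are hypothetical.

```python
import hashlib
import hmac

def sign_image(image: bytes, key: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the binary file."""
    return hmac.new(key, image, hashlib.sha256).digest()

def verify_image(image: bytes, tag: bytes, key: bytes) -> bool:
    """Accept the image only if its tag matches (constant-time comparison)."""
    return hmac.compare_digest(sign_image(image, key), tag)

key = b"hypothetical-shared-secret"
image = b"\xa0\x80"
tag = sign_image(image, key)

genuine_ok = verify_image(image, tag, key)
tampered_ok = verify_image(b"\xa0\x81", tag, key)  # one bit flipped
```

The update routine would write the EEPROM only when verification succeeds, so a modified binary file is rejected before it can reconfigure the FPGA.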

So, the next time your boss points to the FPGA in your block diagram, or on your prototype, and asks, “What does this IC do?” you can look them in the eye and tell them, “What would you like it to do?”
